If you watch carefully enough, just about anything can be a microphone, as new research from MIT’s Computer Science and Artificial Intelligence Laboratory (spotted by Kottke.org) shows.

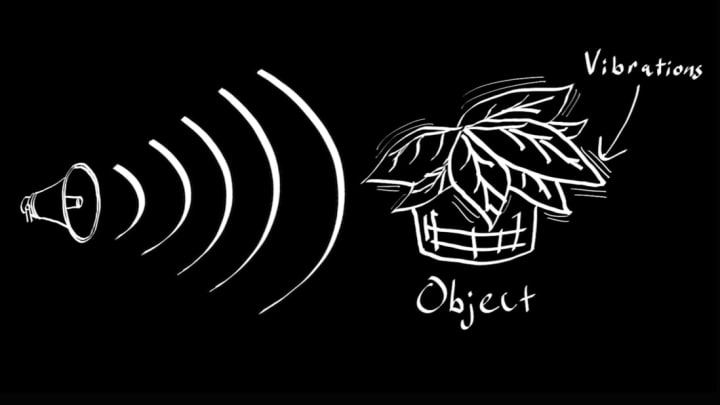

Using high-speed video, researchers created what they call the "visual microphone," a way to recover sound from the minute vibrations of objects. In the video below, they demonstrate the technique using household items like a plant, a bag of chips, and a pair of Apple earbuds.

When sound waves hit an object, they cause nearly invisible vibrations. We can't see them with our eyes, but high-speed video can capture them. The MIT researchers created an algorithm to analyze these vibrations and turn them back into sound. To test the technique, they played "Mary Had a Little Lamb" through a speaker and recovered recognizable audio of the song from video of a plant in the same room. They also tested it with a person speaking the lyrics to the song in the same room as a bag of chips, with the video camera trained on the bag from behind soundproof glass. In perhaps the most impressive example, they trained the camera on a set of earbuds plugged into a computer that was playing audio. When they played their recovered audio of "Under Pressure" for Shazam, the app was able to identify the song.
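For the curious, the basic idea of turning tiny frame-to-frame motion into a waveform can be sketched in a few lines of Python. This is a toy illustration, not the MIT team's algorithm: it fakes a one-dimensional "video" of a textured object wobbling by a hundredth of a pixel, then estimates the wobble in each frame with a simple gradient-based (linearized) motion estimate. The function names, the 2,200 frames-per-second rate, the 440 Hz tone, and the texture are all assumptions made up for the example.

```python
import numpy as np


def synth_frames(tone_hz, fps, n_frames, amplitude_px=0.01, width=256):
    """Fake a 1-D video: a smooth texture whose position wobbles with a tone.

    Every value here is an assumption chosen for illustration only.
    Returns (frames, shift), where shift is the true per-frame displacement.
    """
    x = np.arange(width)
    t = np.arange(n_frames) / fps
    shift = amplitude_px * np.sin(2 * np.pi * tone_hz * t)
    frames = np.stack([
        np.sin(2 * np.pi * (x - s) / 32.0)
        + 0.5 * np.sin(2 * np.pi * (x - s) / 11.0)
        for s in shift
    ])
    return frames, shift


def recover_motion(frames):
    """Estimate one displacement value per frame relative to the first frame.

    For a tiny shift s, frame(x) = ref(x - s) is roughly ref(x) - s * ref'(x),
    so a least-squares fit gives s = -sum(diff * ref') / sum(ref' ** 2).
    """
    ref = frames[0]
    gx = np.gradient(ref)          # spatial gradient of the reference frame
    diff = frames - ref            # change in brightness, frame by frame
    signal = -(diff @ gx) / (gx @ gx)
    return signal - signal.mean()  # remove any constant offset


def dominant_frequency(signal, fps):
    """Return the strongest non-zero frequency in the recovered signal, in Hz."""
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fps)
    return freqs[np.argmax(spectrum[1:]) + 1]


if __name__ == "__main__":
    fps = 2200  # assumed camera frame rate
    frames, true_shift = synth_frames(tone_hz=440, fps=fps, n_frames=fps)
    recovered = recover_motion(frames)
    print("dominant frequency (Hz):", dominant_frequency(recovered, fps))
```

The recovered signal is one number per frame, which is why the frame rate matters: a camera can only capture tones below half its frame rate, so ordinary video speeds would miss most of what we hear.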

In each case, the audio wasn't a perfect replica of the original, but it was clearly recognizable. It sounds like audio played through really bad headphones, or from the other side of a wall. Watch the video below to see it in action. You’ll never look at your houseplants the same way again.

[h/t Kottke.org]