In a major step for artificial intelligence, a computer program has finally beaten a professional player at the ancient Chinese game of Go.

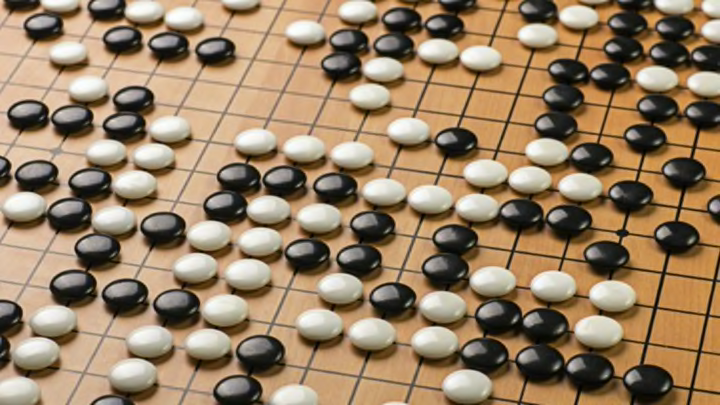

Go has long been considered one of the great challenges facing artificial intelligence. Computers can beat the very best players at chess, but Go, played on a 19-by-19 grid, is significantly harder for a program to master. In chess, each side has 20 possible first moves; in Go, the first player has 361, one for every intersection on the board. Computer programs have beaten amateur players before, but this is the first time one has taken down a professional, a feat scientists thought was still a decade away.

London-based researchers at Google DeepMind developed AlphaGo, a program that absorbs and learns information using a structure inspired by the networks of neurons in the human brain. Using a method called deep learning, the researchers trained the program first by having it study games between expert Go players and then by having it play against itself. AlphaGo beat Europe's reigning Go champion, Fan Hui, in five consecutive games. Fan tells Nature that the program plays more or less like a human opponent, albeit one that behaves a bit strangely.

AlphaGo's creators describe their winning program in the latest issue of Nature. But while AlphaGo is a major breakthrough for artificial intelligence, you don't need to worry about robots taking over the world just yet. AlphaGo can only learn to play Go, and it has few applications beyond challenging professional players at the board game.

[h/t: Nova Next]